Businesses are quickly adopting LLMs across their operations because the models can efficiently handle tasks such as drafting reports, summarizing data, and answering questions. Teams see real potential to save time and improve efficiency.

At the same time, there’s caution. Unless properly managed, LLMs can give wrong answers (hallucinations), expose sensitive data, or disrupt existing workflows.

That is why companies need a controlled approach. Using AI for business processes in a structured, secure way helps LLMs support teams without creating data or process chaos.

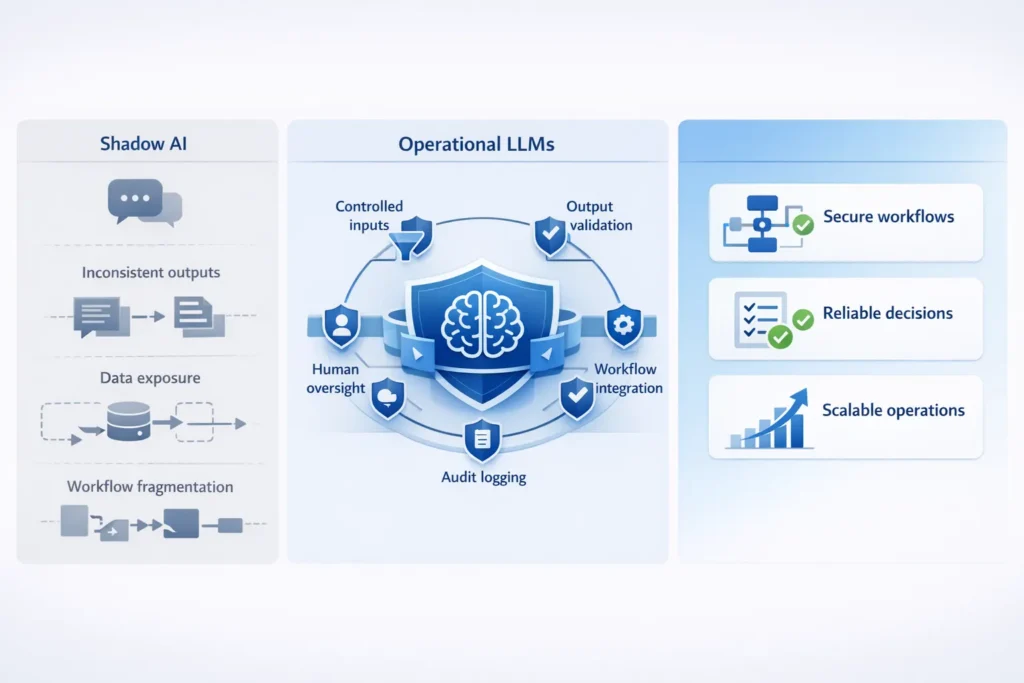

Shadow AI appears when employees begin to use LLMs on their own. These are applications or prompts that nobody officially manages, which makes them confusing and inefficient.

Outputs can be inconsistent, leading to mistakes or risky decisions. One person’s AI result might differ from another’s, making it hard to trust the work.

Using public LLMs without proper controls can expose sensitive business data. Confidential information might be shared outside the company without anyone realizing it.

Instead of speeding things up, unstructured LLM use can fragment workflows and slow teams down. AI without data risk is possible, but it needs careful, controlled AI implementation to work safely and reliably.

Not all AI is ready for business use. Experimental LLMs are great for testing, but production-grade AI is built to work safely and reliably in real operations.

Operational LLMs are embedded inside workflows instead of being standalone chat tools. They help automate tasks directly where work happens, like approvals, document summaries, or data checks.

These systems often combine rules and AI in a deterministic + AI hybrid, so outputs are more consistent, predictable, and safe for business use. Companies can then deploy them for enterprise LLM use cases and other operational AI use cases with far less risk of error or chaos.
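As a minimal sketch of the hybrid idea, the example below routes a support ticket with fixed rules first and only falls back to a model for ambiguous cases. The names `route_ticket`, `ALLOWED_ROUTES`, and `fake_llm` are hypothetical, and the model is passed in as a plain function so no specific vendor API is assumed.

```python
from typing import Callable

# Hypothetical set of routes the business has approved.
ALLOWED_ROUTES = {"finance", "legal", "general"}


def route_ticket(text: str, classify_with_llm: Callable[[str], str]) -> str:
    """Route a ticket: deterministic rules first, model second, safe default last."""
    lowered = text.lower()

    # Deterministic rules cover the cases the business already understands.
    if "invoice" in lowered or "refund" in lowered:
        return "finance"
    if "contract" in lowered:
        return "legal"

    # Only ambiguous tickets reach the model, and its answer is constrained
    # to the approved routes. Anything else falls back to a safe default.
    suggestion = classify_with_llm(text).strip().lower()
    return suggestion if suggestion in ALLOWED_ROUTES else "general"


def fake_llm(text: str) -> str:
    """Stand-in for a real model call (assumption for this sketch)."""
    return "Legal"
```

Because the rules run first, well-understood cases always get the same answer, and an unexpected model reply can never produce a route outside the approved set.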

LLMs shouldn’t make critical approvals without checks, since errors at this stage can be expensive.

They should not post financial transactions without validation, and they should not make compliance decisions without human review.

These boundaries are key to safe use. Strong AI governance for business ensures LLMs help teams without creating risk.
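One way to express these boundaries in code is a small policy gate, sketched below under stated assumptions. The action names in `HIGH_RISK_ACTIONS` and the `decide` function are hypothetical. The point is only that the model's recommendation never becomes final for a high-risk action until a person has decided.

```python
from typing import Optional

# Hypothetical action names that must never be finalized by the model alone.
HIGH_RISK_ACTIONS = {"approve_request", "post_transaction", "compliance_decision"}


def decide(action: str, llm_recommendation: str,
           human_decision: Optional[str] = None) -> str:
    """Return the final decision for an action.

    For high-risk actions the model's recommendation is advisory only:
    the result stays pending until a human decision is supplied.
    """
    if action in HIGH_RISK_ACTIONS:
        if human_decision is None:
            return "pending_human_review"
        return human_decision
    # Low-risk actions may use the model's recommendation directly.
    return llm_recommendation
```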

When using LLMs in business, treat them as a layer on top of your existing systems and not the system of record. They should assist workflows without replacing the trusted sources of your data. Many organizations address this by using enterprise-ready AI and LLM integration services that ensure models are securely embedded into business workflows rather than used as disconnected chat tools.

It’s important to control inputs and outputs. That means only approved data goes into the AI, and results are checked or filtered before use. This reduces errors and protects confidential data.

Techniques like retrieval-augmented generation (RAG) allow LLMs to pull answers from your own internal documents or databases, rather than guessing or relying on public information. This makes outputs more accurate and more relevant to your business.
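The retrieval step can be illustrated with a deliberately simple sketch. It ranks internal documents by keyword overlap with the question and places the best matches into the prompt. Production RAG systems typically use embeddings and a vector index instead of keyword overlap, and the function names and sample documents here are assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict, top_k: int = 2) -> list:
    """Return ids of the documents sharing the most words with the question."""
    q_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc_id: len(q_tokens & tokenize(documents[doc_id])),
        reverse=True,
    )
    # Drop documents with no overlap at all so irrelevant text is never sent.
    return [d for d in ranked[:top_k] if q_tokens & tokenize(documents[d])]


def build_prompt(question: str, documents: dict) -> str:
    """Build a prompt that asks the model to answer only from retrieved text."""
    context = "\n".join(f"[{d}] {documents[d]}" for d in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical internal documents.
DOCS = {
    "travel": "Travel expenses above 500 EUR need manager approval.",
    "leave": "Annual leave requests go through the HR portal.",
    "security": "Laptops must use full disk encryption.",
}
```

Calling `build_prompt("Who approves travel expenses?", DOCS)` would include only the travel policy in the context, which is what keeps the model grounded in company data.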

Deciding between private models and public APIs is crucial. Private models keep sensitive data in-house, while public APIs can be useful for general tasks but may expose confidential information if not carefully managed.

Finally, implement audit logging and traceability. Every action the AI takes should be recorded, so you can track decisions, fix errors, and stay compliant.
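A minimal sketch of an audit record is shown below. The field names are assumptions, not a standard. The record stores a hash of the prompt rather than the raw text, which is one possible design choice when prompts may contain sensitive data. Some compliance regimes may require retaining the full text instead.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, model: str, prompt: str, response: str) -> dict:
    """Build one audit entry for a single model call."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        # Hash instead of raw text: the call stays traceable without
        # copying sensitive content into the log (assumed policy).
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_chars": len(response),
    }


def to_json_line(record: dict) -> str:
    """Serialize a record as one line, ready to append to a log file."""
    return json.dumps(record, sort_keys=True)
```

Writing one JSON object per line keeps the log easy to append to and easy to search later.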

Following this architecture supports safe use of LLMs in business, builds strong AI governance for business, and keeps workflows secure, reliable, and efficient.

Use role-based access control so only the right people can interact with LLMs. This keeps sensitive information safe.
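Role-based access can be sketched as a permission check that runs before any model call. The roles, task names, and the `run_task` helper below are hypothetical. A real deployment would usually read roles from the company's identity provider rather than a hard-coded dictionary.

```python
from typing import Callable

# Hypothetical mapping of roles to the LLM tasks they may run.
ROLE_PERMISSIONS = {
    "analyst": {"summarize_report"},
    "finance_manager": {"summarize_report", "draft_payment_memo"},
}


def can_use(role: str, task: str) -> bool:
    """Return True if the role is allowed to run the task."""
    return task in ROLE_PERMISSIONS.get(role, set())


def run_task(role: str, task: str, prompt: str,
             call_model: Callable[[str], str]) -> str:
    """Call the model only after the permission check passes."""
    if not can_use(role, task):
        raise PermissionError(f"role '{role}' may not run '{task}'")
    return call_model(prompt)
```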

Create prompt templates and constraints to guide the AI. This helps avoid mistakes and keeps outputs consistent.
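A prompt template with constraints might look like the sketch below, which uses Python's standard `string.Template`. The template wording, the allowed document types, and the sentence limit are all assumptions chosen for illustration.

```python
from string import Template

# Fixed wording: users supply only the document type and the text.
SUMMARY_TEMPLATE = Template(
    "Summarize the following $doc_type in at most $max_sentences sentences. "
    "Use only facts from the text. If something is unclear, say so.\n\n"
    "Text:\n$body"
)

# Hypothetical list of document types the business has approved.
ALLOWED_DOC_TYPES = {"invoice", "contract", "meeting notes"}


def render_summary_prompt(doc_type: str, body: str, max_sentences: int = 3) -> str:
    """Fill the template after checking the constraints."""
    if doc_type not in ALLOWED_DOC_TYPES:
        raise ValueError(f"unsupported document type: {doc_type}")
    if not 1 <= max_sentences <= 10:
        raise ValueError("max_sentences must be between 1 and 10")
    return SUMMARY_TEMPLATE.substitute(
        doc_type=doc_type, max_sentences=max_sentences, body=body
    )
```

Keeping the instructions in one shared template means two employees asking for the same kind of summary send the model the same instructions.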

Sanitize inputs before sending them to the model. Remove any confidential or irrelevant data to reduce risk.
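As a rough sketch of input sanitization, the example below redacts email addresses and card-like digit sequences with regular expressions. The patterns are simplified assumptions and will miss many kinds of sensitive data, so real deployments usually rely on a dedicated data loss prevention or PII detection tool.

```python
import re

# Simplified patterns, for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def sanitize(text: str) -> str:
    """Replace emails and card-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = CARD.sub("[CARD]", text)
    return text
```

Running the sanitizer before every model call means the placeholder, not the original value, is what leaves the company.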

Check AI results with output validation and confidence scoring. This helps teams trust the answers and spot mistakes quickly.
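The sketch below shows one way output validation and a confidence threshold could work together. It assumes the model has been asked to reply with JSON containing `answer` and `confidence` fields, which is a convention invented for this example. Self-reported confidence is not guaranteed to be well calibrated, so the threshold should be treated as a routing hint rather than proof of correctness.

```python
import json

REQUIRED_FIELDS = {"answer", "confidence"}


def validate_output(raw: str, min_confidence: float = 0.8) -> dict:
    """Check the model's reply and decide whether it can be used directly."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return {"status": "rejected", "reason": "not valid JSON"}

    if not isinstance(data, dict) or not REQUIRED_FIELDS.issubset(data):
        return {"status": "rejected", "reason": "missing fields"}

    confidence = data["confidence"]
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        return {"status": "rejected", "reason": "invalid confidence"}

    # Low-confidence answers are kept but sent to a person for review.
    if confidence < min_confidence:
        return {"status": "needs_review", "answer": data["answer"]}
    return {"status": "accepted", "answer": data["answer"]}
```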

Consider data residency: where your data is stored matters for security and compliance.

These practices show how companies use LLMs securely and enable AI without data risk.

LLMs can be integrated into approval workflows to help draft summaries, identify possible issues, or suggest next steps. This speeds things up while humans stay in charge.

AI should be used as decision support rather than a replacement. It provides insights and suggestions that help teams make faster and smarter decisions without depending on the AI alone.

Human-in-the-loop automation is key for safety. People review critical outputs before final approval, so errors are caught and workflows remain reliable.

LLMs can also trigger workflow actions automatically. For example, they can assign tasks, update records, or send notifications, which reduces manual effort and keeps operations smooth.
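A simple way to let a model trigger actions safely is to have it propose an action by name and let ordinary code decide whether to run it. The sketch below assumes a proposal shaped like `{"action": ..., "args": {...}}`. That shape, the handler names, and the in-memory `log` list are all hypothetical.

```python
def assign_task(args: dict, log: list) -> None:
    """Hypothetical handler: record a task assignment."""
    log.append(f"assigned {args['task']} to {args['owner']}")


def send_notification(args: dict, log: list) -> None:
    """Hypothetical handler: record a notification."""
    log.append(f"notified {args['recipient']}: {args['message']}")


# Only actions listed here can ever be executed.
HANDLERS = {
    "assign_task": assign_task,
    "send_notification": send_notification,
}


def dispatch(proposal: dict, log: list) -> bool:
    """Run a model-proposed action if it is on the allowlist."""
    action = proposal.get("action")
    handler = HANDLERS.get(action)
    if handler is None:
        # Unknown or disallowed actions are blocked and recorded.
        log.append(f"blocked unknown action: {action}")
        return False
    handler(proposal.get("args", {}), log)
    return True
```

With this design the model can suggest anything, but only allowlisted actions run, and blocked attempts leave a trace.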

These methods show how AI workflow automation and operational AI use cases turn AI into real, repeatable business value.

LLMs can make business operations more efficient by speeding up workflows, reducing errors, and supporting smarter decisions, but only when they are structured and managed carefully.

Chaos often comes from ungoverned experimentation, such as employees using different, unvetted tools or sharing sensitive data. This can hinder team productivity rather than help it.

With appropriate guidelines, monitoring, and human oversight, smart companies treat LLMs as part of their infrastructure: something that can add real value while minimizing risk.

© 2025, Data Inseyets-All Rights Reserved.